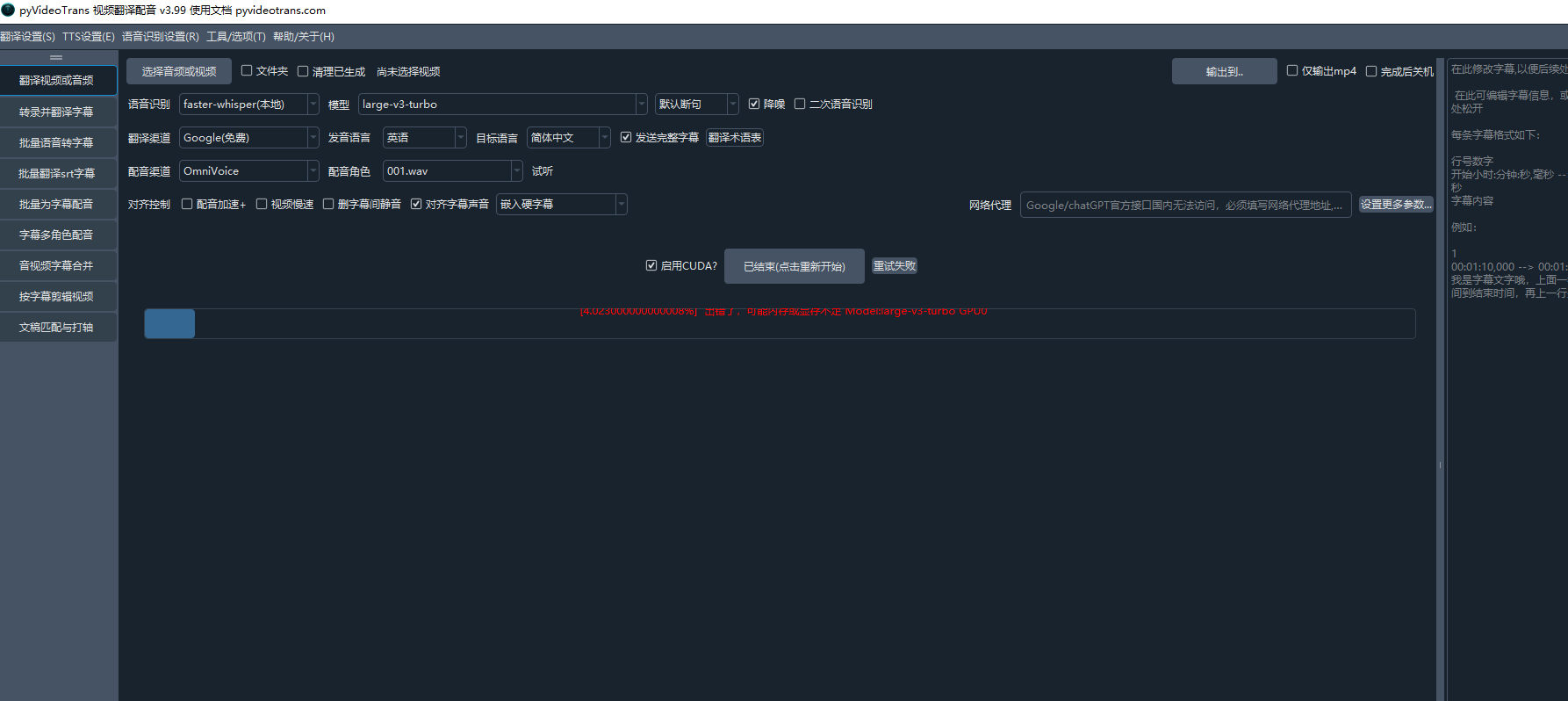

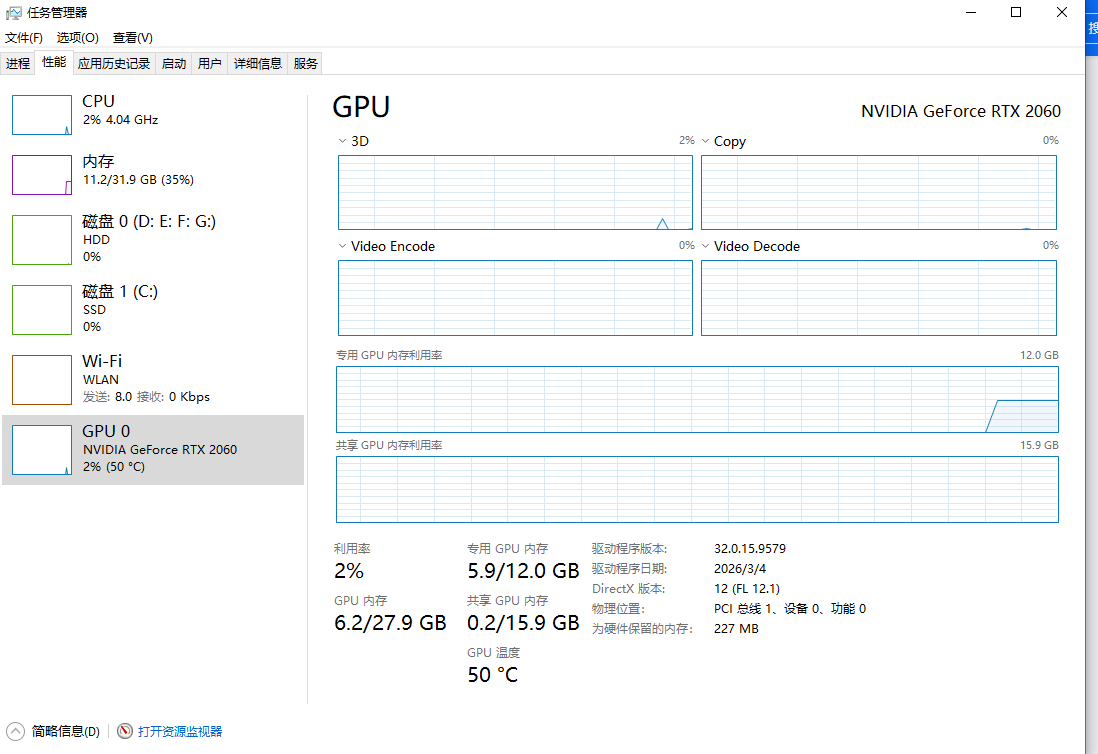

An error has occurred. There may be insufficient memory or video memory. [GPU0]

Traceback (most recent call last):

File "/media/charlie/Tub8/Vids/pyvideotrans/videotrans/configure/_base.py", line 285, in _new_process

_rs = future.result()

File "/home/charlie/.local/share/uv/python/cpython-3.10.19-linux-x86_64-gnu/lib/python3.10/concurrent/futures/_base.py", line 458, in result

return self.__get_result()

File "/home/charlie/.local/share/uv/python/cpython-3.10.19-linux-x86_64-gnu/lib/python3.10/concurrent/futures/_base.py", line 403, in __get_result

raise self._exception

concurrent.futures.process.BrokenProcessPool: A process in the process pool was terminated abruptly while the future was running or pending.

=

system:Linux-6.8.0-94-generic-x86_64-with-glibc2.35

version:v3.98

frozen:False

language:en

root_dir:/media/charlie/Tub8/Vids/pyvideotrans

Python: 3.10.19 (main, Feb 12 2026, 00:42:18) [Clang 21.1.4 ]